- Cloud Security Newsletter

- Posts

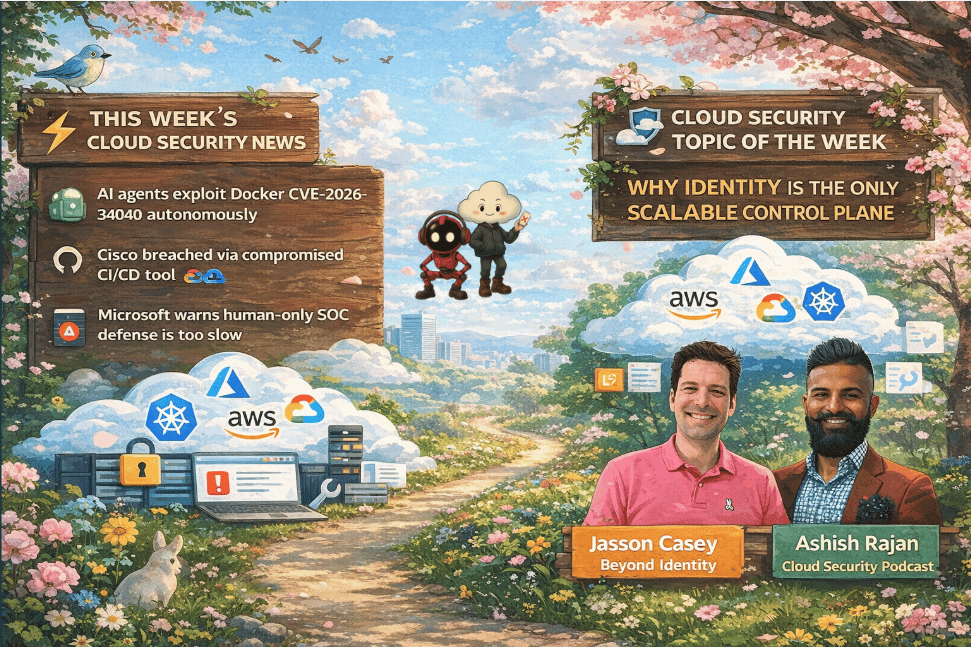

- 🚨 AI Agents Can Now Exploit Docker And Your CI/CD Is Next

🚨 AI Agents Can Now Exploit Docker And Your CI/CD Is Next

A Docker container escape that AI agents can autonomously exploit, a CI/CD breach at Cisco, and Microsoft’s 22-second attack hand-off all point to one shift: execution is now the attack surface. Identity, not AppSec, is emerging as the only scalable control plane for the agentic threat model.

Hello from the Cloud-verse!

This week’s Cloud Security Newsletter topic: Identity Is the Only Scalable Control Plane (continue reading)

In case this is your first Cloud Security Newsletter: you are in good company!

You are reading this issue alongside friends and colleagues from companies like Netflix, Citi, JP Morgan, LinkedIn, Reddit, GitHub, GitLab, Capital One, Robinhood, HSBC, British Airways, Airbnb, Block, Booking Inc & more. Like you, they subscribe to learn what’s new in cloud security each week from their industry peers, many of whom also listen to Cloud Security Podcast & AI Security Podcast every week.

Welcome to this week’s Cloud Security Newsletter

The security industry has spent the past 18 months debating shadow AI as a data governance problem. This week, that framing gets a hard reset.

Between Microsoft declaring that human-only defense is "no longer viable," a publicly disclosed Docker zero-day that AI coding agents can autonomously discover and exploit, and a Cisco breach traced to a compromised CI/CD pipeline, the threat is no longer theoretical. The attack surface has fundamentally shifted to wherever your agents, workloads, and non-human identities operate.

To make sense of it, we spoke with Jasson Casey, Co-founder and CEO of Beyond Identity, one of the clearest thinkers on where identity security intersects with agentic AI risk. His argument, built from first principles, is that identity is not just one layer of your defense; it is the only control plane capable of managing the agentic threat model systematically. [Listen to the episode]

⚡ TL;DR for Busy Readers

AI agents can now discover + exploit Docker container escapes autonomously (Docker CVE-2026-34040)

Cisco was breached via a trusted CI/CD tool, a compromised GitHub Action (not a direct attack)

Microsoft confirms attack hand-off = 22 seconds → humans can’t keep up

The real gap isn’t shadow AI — it’s uncontrolled non-human identity

CrowdStrike's $1.16B bet on Identity + Browsers

📰 THIS WEEK'S TOP 4 SECURITY HEADLINES

Each story includes why it matters and what to do next — no vendor fluff.

🚨 1. 🧬 AI Agents Can Exploit Docker - Autonomously

What Happened

Cyera Research Labs disclosed CVE-2026-34040 (CVSS 8.8), an incomplete fix for the 2024 perfect-10 vulnerability CVE-2024-41110. When an API request body exceeds 1 MB, Docker's middleware silently drops it before the AuthZ plugin inspects it, while the daemon processes the full payload and creates the requested container, potentially with privileged access to the host filesystem. The attack requires one crafted HTTP request, no credentials, and no special tooling. Fixed in Docker Engine 29.3.1 (released March 25).
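To make the failure mode concrete, here is a toy model of the gap between what the AuthZ plugin inspects and what the daemon acts on. It is a sketch built only from the description above, not Docker's code: every function name, the `privileged` marker, and the `fail_closed` behaviour are hypothetical, and the fail-closed branch is one plausible safe design rather than a description of the actual patch.

```python
# Toy model of the bypass described above. This is NOT Docker's source code;
# every name here is hypothetical and exists only to show the logic gap.
MAX_INSPECTED_BODY = 1024 * 1024  # the 1 MB threshold named in the disclosure


def body_seen_by_authz(body: bytes) -> bytes:
    """Flawed middleware: an oversized body is silently dropped, not rejected."""
    return body if len(body) <= MAX_INSPECTED_BODY else b""


def authz_plugin_allows(inspected: bytes) -> bool:
    """Hypothetical policy: deny any request that asks for a privileged container."""
    return b"privileged" not in inspected


def handle_request(body: bytes, fail_closed: bool) -> str:
    if fail_closed and len(body) > MAX_INSPECTED_BODY:
        # One possible safe behaviour (an assumption, not the actual patch):
        # refuse anything the plugin cannot fully inspect.
        return "rejected: body too large to inspect"
    if not authz_plugin_allows(body_seen_by_authz(body)):
        return "denied by AuthZ plugin"
    # The daemon acts on the full original body, not on what the plugin saw.
    return "container created"


small = b'{"privileged": true}'
padded = b'{"privileged": true, "padding": "' + b"A" * MAX_INSPECTED_BODY + b'"}'
```

With `fail_closed=False`, `small` is denied while `padded` gets through, because the plugin is shown an empty body. The takeaway is the design rule, not the specifics: an enforcement point that cannot see the payload must refuse it rather than wave it through.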

What elevates this beyond a standard container escape: Cyera demonstrated that AI coding agents running inside Docker-based sandboxes can be tricked into exploiting it via prompt injection hidden in a GitHub repository and, more alarmingly, can independently discover and construct the exploit themselves while attempting legitimate debugging tasks.

Why it matters: This is the first publicly documented case where an AI coding agent autonomously discovers and executes a container escape as a side-effect of a legitimate developer task. The blast radius extends from full host compromise to cloud credential theft, Kubernetes cluster takeover, and SSH access to production servers.

Immediate actions:

Patch to Docker Engine 29.3.1: verify with docker version --format ''

Check whether AuthZ plugins are in use: docker info --format ''

Scope Docker API access aggressively; enforce rootless mode where possible

Treat AI agent access to the Docker socket as a privileged identity requiring governance, not a developer convenience
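If you want to run the patch check across a fleet rather than by hand, a minimal sketch might look like the following. The helper names are ours, and the `{{.Server.Version}}` template passed to `docker version --format` is an assumption based on the Docker CLI's Go-template formatting, not something quoted from the advisory. The comparison ignores pre-release suffixes, so treat it as a first-pass filter.

```python
import re
import subprocess

PATCHED = (29, 3, 1)  # fixed release named in the disclosure


def parse_version(text: str) -> tuple:
    """Extract (major, minor, patch); suffixes such as '-rc1' are ignored."""
    match = re.match(r"\s*v?(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"unrecognised version string: {text!r}")
    major, minor, patch = match.groups()
    return (int(major), int(minor), int(patch))


def is_patched(version_text: str) -> bool:
    """True when the reported engine version is at or above the fixed release."""
    return parse_version(version_text) >= PATCHED


def local_engine_version() -> str:
    """Ask the local daemon for its version (requires the docker CLI on PATH)."""
    result = subprocess.run(
        ["docker", "version", "--format", "{{.Server.Version}}"],
        capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()
```

Calling `is_patched(local_engine_version())` on each host gives a simple pass/fail signal; comparing tuples rather than strings avoids the classic trap where "29.10.0" sorts before "29.3.1".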

Sources: Cyera Research | The Hacker News | eSecurity Planet

🚨 2. 💣 Cisco Breached via CI/CD Supply Chain

What Happened

Attackers exploited a compromised GitHub Action linked to the Trivy vulnerability scanner, stealing CI/CD credentials and breaching Cisco's internal development environment. Over 300 repositories and AWS keys were accessed.

Why it matters: This is a textbook example of transitive trust failure in the CI/CD pipeline, and it is becoming the dominant pattern for cloud infrastructure compromise. The attacker did not breach Cisco directly; they breached a tool that Cisco trusted. For cloud security leaders, this reinforces three non-negotiable controls: enforce short-lived credentials and workload identity (no static keys, ever); implement artifact integrity validation using SLSA and Sigstore; and extend your monitoring posture to detect pipeline-level anomalies, not just runtime threats. The Cisco breach also illustrates why Jasson Casey's point about identity as the systematic answer resonates: if every workload, tool, and pipeline step had a device-bound, attributable identity, the blast radius of a compromised GitHub Action would be dramatically contained.
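As a starting point for the "no static keys, ever" control, here is a rough sketch of a scanner that flags long-term AWS access key IDs in pipeline configuration. It relies on the `AKIA` prefix AWS uses for long-term access key IDs; the function name is hypothetical, and the sample key is the placeholder from AWS's own documentation, not a real credential. A dedicated secret scanner will catch far more than this.

```python
import re

# Long-term IAM access key IDs start with "AKIA"; temporary STS credentials
# start with "ASIA" and are deliberately not flagged here.
STATIC_KEY_ID = re.compile(r"\bAKIA[0-9A-Z]{16}\b")


def find_static_key_ids(text: str) -> list:
    """Return (line_number, key_id) pairs for every long-term key ID found."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for match in STATIC_KEY_ID.finditer(line):
            findings.append((line_number, match.group()))
    return findings


# Hypothetical workflow fragment with a hard-coded key (AWS's documented example ID).
sample_workflow = (
    "env:\n"
    "  AWS_ACCESS_KEY_ID: AKIAIOSFODNN7EXAMPLE\n"
    "  AWS_REGION: us-east-1\n"
)
```

Running `find_static_key_ids(sample_workflow)` reports the key on line 2. Any hit in a workflow file is a candidate for replacement with short-lived, workload-issued credentials.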

Sources: Dark Reading | BleepingComputer

☁️ 3. ⚡ Microsoft: Human-Only Defense Is Dead

What Happened

Microsoft's Security Blog, in a post published April 2 from RSAC 2026, argues that AI is no longer just a tool for attackers: it now accelerates every phase of the kill chain, from reconnaissance to persistence. Microsoft's Threat Intelligence team introduced the "agentic threat model" as the new defensive paradigm, citing Tycoon2FA (linked to Storm-1747), which generated tens of millions of phishing emails monthly and compromised roughly 100,000 organizations. Mandiant's M-Trends 2026 report documented attacker hand-off time collapsing to just 22 seconds. Microsoft declared human-only defense is no longer viable.

Why it matters: This isn't a vendor positioning statement; it's a structural shift in the threat model that should directly inform SOC staffing strategy, tool investment, and AI security governance. The 22-second hand-off figure means that detection-to-containment workflows built around human review cycles are architecturally insufficient. Security leaders building 2026 roadmaps need to factor agentic playbooks and AI-assisted response into their operating model, not as aspirational initiatives, but as baseline requirements. This directly reinforces the conversation with Jasson Casey this week about why security teams not adopting agentic workflows are already behind.

Sources: Microsoft Security Blog | Mandiant M-Trends 2026 | SiliconANGLE

🏥 4. 🧠 CrowdStrike Bets $1.16B on Identity + Browser

What Happened

CrowdStrike's two Q1 mega-deals, SGNL ($740M, continuous identity authorization) and Seraphic Security ($420M, enterprise browser protection), closed this month, representing $1.16B in combined spend and the platform's most significant capability expansion since the Humio acquisition. SGNL replaces static RBAC with real-time, context-aware authorization decisions for every API call, SaaS session, and AI workload. Seraphic adds a browser-native agent to Falcon, targeting the exploding threat surface created by browser-based SaaS access and AI coding assistants.

Why it matters: For enterprise buyers already on Falcon, this is a consolidation forcing function. SGNL's continuous authorization capability directly addresses the AI non-human identity risk gap that most identity programs currently leave wide open. Seraphic's approach of instrumenting the browser runtime (rather than deploying a proxy) produces richer telemetry and lower latency, which matters for high-frequency SaaS and developer workflows. Expect Palo Alto, Microsoft, and SentinelOne to respond with counter-positioning. Security architects should revisit their CrowdStrike roadmap conversations now, but with realistic expectations: integration lags are real, and buying into a new module 6–9 months post-close carries meaningful integration risk. These acquisitions also validate exactly what Jasson Casey describes this week: that continuous, data-plane identity enforcement is becoming the core of the modern security stack.

Sources: BankInfoSecurity | CSO Online | GlobeNewswire

🛠️ WHAT YOU SHOULD DO THIS WEEK

Immediate actions:

Patch Docker → 29.3.1

Treat AI agents as privileged identities (not tools)

Kill static credentials in CI/CD → move to workload identity

Add runtime visibility for tool execution

Audit which tools your agents can call

🎯 Cloud Security Topic of the Week:

Why Identity Is the Only Systematic Answer to the Agentic Threat Model

The conversation this week with Jasson Casey surfaces a problem that most enterprise security programs have not yet structurally addressed: AI agents are not users, not services, and not endpoints in the traditional sense, but they behave like all three simultaneously. That gap is precisely where adversaries are beginning to operate.

The agentic threat model is not a future concern. It is happening in your developer workflows right now, on managed machines, unmanaged machines, and contractor devices alike. And the controls that most organizations have (network controls, DLP, SIEM, EDR) were not designed with this operating model in mind. The question is no longer whether you have an AI security gap. It is whether you have a systematic way to close it.

Featured Experts This Week 🎤

Jasson Casey - Co-founder & CEO, Beyond Identity

Ashish Rajan - CISO | Co-Host of AI Security Podcast, Host of Cloud Security Podcast

Definitions and Core Concepts 📚

Before diving into our insights, let's clarify some key terms:

Non-Human Identity (NHI): Machine identities associated with workloads, agents, scripts, and services rather than human users. AI coding agents create a new and particularly complex category of NHI because they operate autonomously, call tools, access credentials, and make decisions without direct human supervision at the moment of action.

Device-Bound Identity: An authentication credential cryptographically tied to the physical device hardware, making it non-exportable and non-phishable. Unlike session tokens or API keys, device-bound credentials cannot be stolen and replayed from a different machine.

Prompt Injection: An attack technique where malicious instructions are embedded in content that an AI agent will process (tool outputs, file contents, web pages, or API responses), causing the agent to take actions that were not intended by the user or operator. Jasson Casey notes that prompt injection does not have to happen all at once; it can be staged across multiple tool calls, leveraging how transformers associate related context.

Living Off the Land (LoTL): An attack technique where adversaries use legitimate system tools and processes already present on a target to conduct malicious activity, avoiding the need to introduce new malware. Casey draws a direct parallel between this and AI agent tool execution: every tool call is a potential LoTL vector.

💡Our Insights from this Practitioner 🔍

The Shadow AI Framing Is Costing You Time You Don't Have

Organizations are spending enormous cycles trying to enumerate and govern unauthorized AI tool usage; shadow AI discovery products are already advertising in airport terminals, as Casey noted. But shadow AI governance is, at best, a starting point. It answers the "what do we have" question. It does not answer the "how do we systematically secure it" question.

As Casey put it: "Shadow AI is kind of the way of getting the conversation started. A lot of organizations are kind of thinking about, well, let's just number one figure out what we have. And that's a great way to start because again, like without identify, you can't really run the other CSF functions. But I would argue it is just the tip of the iceberg."

The real problem is not that employees are using AI tools without permission. The real problem is that those AI tools (code assistants, agents, browser plugins) are running on machines with access to your intellectual property, your credentials, and your production infrastructure, with no systematic visibility into what they are doing at the tool execution level.

Every Tool Call Is a Security Event

This is the conceptual shift that most security programs have not yet made. When a developer runs Claude Code or Cursor on their laptop, the AI model is not the risk surface; the tools the model calls are. Every tool execution is a potential data exfiltration event. Every tool result is a potential prompt injection vector. And because modern agents have memory and can compact context across sessions, a prompt injection can be staged across multiple tool calls over an extended period, functioning, as Casey describes, like malware persistence.

"Every time your agent executes a tool, it's a chance for proprietary information to basically be exfil. It's a chance for maybe that tool, if it's not actually authorized or maybe even malicious, to send C2 commands back to the agent. And the agent, just like Ron Burgundy, will happily do whatever's on the teleprompter."

The Docker CVE-2026-34040 disclosure this week makes this concrete: an AI coding agent, pursuing a legitimate debugging task, can now autonomously discover and execute a container escape. This is not a hypothetical. It is a documented proof of concept.
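One way to picture "every tool call is a security event" is a thin wrapper that records each call before it runs and refuses anything off an allowlist. This is a minimal sketch under our own assumptions: the allowlist contents, the record fields, and the `call_tool` helper are all hypothetical and not tied to any particular agent framework.

```python
import json
import time
from typing import Any, Callable

ALLOWED_TOOLS = {"read_file", "run_tests"}  # hypothetical allowlist
audit_log: list = []  # in practice this would ship to your SIEM


def call_tool(agent_id: str, name: str, tool: Callable[..., Any], **kwargs: Any) -> Any:
    """Log the call as a security event, then run it only if it is allowlisted."""
    record = {
        "ts": time.time(),
        "agent": agent_id,
        "tool": name,
        "args": kwargs,
        "allowed": name in ALLOWED_TOOLS,
    }
    audit_log.append(record)  # recorded even when the call is refused
    if not record["allowed"]:
        raise PermissionError(f"tool {name!r} is not on the allowlist")
    result = tool(**kwargs)
    # Result size is a cheap signal for spotting bulk reads or exfiltration.
    record["result_bytes"] = len(json.dumps(result, default=str))
    return result
```

Because the record is written before the allowlist check, denied attempts are as visible as permitted ones, which is often where the interesting signal lives.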

Control Plane Identity Is Not Enough: You Need Data Plane Enforcement

Most enterprise identity programs operate at the control plane: IAM, SSO, MFA, user lifecycle management. These controls answer the question "who is this user?" at the moment of authentication. They do not answer the question "what is this workload doing right now, and should it be allowed to continue?"

Casey's argument is direct: "It's not enough to only be in the control plane. You have to also be in the data plane. Otherwise you cannot be a point of enforcement. And we think code assistant agents, specifically AI agents in general, are kind of the forcing function for that."

The practical implication for enterprise security architects is significant. The security stack you have today (SIEM, EDR, IAM) was designed around a human-centric, session-based model of access. AI agents operate continuously, autonomously, and across multiple systems simultaneously. Retrofitting human-oriented identity controls onto agentic workloads is not a solution; it is a temporary mitigation at best.

What the new model requires is device-bound identity for every workload, including the AI agent itself, so that every tool call, every API interaction, and every data access is attributable to a specific, verified identity. This gives you the chain of provenance needed to detect anomalies, respond to incidents, and reason about blast radius.
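To illustrate what a chain of provenance could look like, here is a small sketch that signs each tool call and links it to the previous one, so tampering or reordering is detectable. Note the substitution: real device-bound identity would rely on hardware-backed, non-exportable asymmetric keys, whereas this example uses a shared-secret HMAC from the Python standard library purely as a stand-in. The record layout and function names are hypothetical.

```python
import hashlib
import hmac
import json


def sign_call(key: bytes, agent_id: str, tool: str, args: dict, prev_sig: str) -> dict:
    """Sign one tool call and bind it to the previous record's signature."""
    payload = json.dumps(
        {"agent": agent_id, "tool": tool, "args": args, "prev": prev_sig},
        sort_keys=True,
    ).encode()
    sig = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"agent": agent_id, "tool": tool, "args": args, "prev": prev_sig, "sig": sig}


def verify_chain(key: bytes, records: list) -> bool:
    """Recompute every signature in order; any edit or gap breaks the chain."""
    prev = ""
    for record in records:
        expected = sign_call(key, record["agent"], record["tool"], record["args"], prev)["sig"]
        if record["prev"] != prev or not hmac.compare_digest(expected, record["sig"]):
            return False
        prev = record["sig"]
    return True
```

Each record names the agent that made the call and commits to everything that came before it, which is the property an incident responder needs when reasoning about blast radius.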

The Systematic Answer: Identity as the Security Architecture Core

Rather than choosing between blocking all AI usage and accepting all AI risk (the "barbell" that both Casey and Ashish Rajan observe across their customer base), Casey's framework offers a third path: a lightweight, identity-anchored security context that wraps the agent at launch, monitors its behavior continuously, and kills the session when risk thresholds are crossed.

This approach addresses multiple problems simultaneously:

Credential theft and session hijacking are eliminated when agent credentials are device-bound and non-exportable. The Reddit story Casey references (an $80,000 Anthropic API bill after a key was compromised) becomes structurally impossible when keys cannot be stolen and replayed.

Prompt injection management becomes tractable when every tool and every agent has an attributed identity, creating a chain of provenance across the entire interaction. You may not be able to prevent every prompt injection attempt, but you can detect when an agent's behavior deviates from expected patterns and terminate the session.

Discovery, which maps to the Identify function of the NIST CSF, becomes automated rather than manual, because the identity system that launches the agent also inventories the tools, MCP connections, plugins, sub-agents, and local permissions it has access to.
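The "wrap at launch, monitor continuously, kill on threshold" pattern can be sketched in a few lines. The event names, weights, and threshold below are invented for illustration; a real deployment would derive them from its own telemetry and risk model.

```python
from dataclasses import dataclass, field

# Hypothetical risk weights per observed behaviour.
RISK_WEIGHTS = {"read_file": 0, "shell_exec": 2, "network_egress": 3, "read_secret": 5}
RISK_THRESHOLD = 8  # hypothetical cut-off for terminating the session


@dataclass
class AgentSession:
    agent_id: str
    score: int = 0
    active: bool = True
    events: list = field(default_factory=list)

    def observe(self, event: str) -> bool:
        """Record one event; return False once the session has been killed."""
        if not self.active:
            return False  # nothing further is accepted after termination
        self.events.append(event)
        self.score += RISK_WEIGHTS.get(event, 1)  # unknown events get a small default weight
        if self.score >= RISK_THRESHOLD:
            self.active = False  # in practice: revoke credentials, stop the process
        return self.active
```

A session that reads files and runs a shell command stays alive; add network egress and a secret read and the cumulative score crosses the threshold, ending the session regardless of which single action looked suspicious.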

Agentic Security Playbooks Are Not Optional

For SOC teams and detection engineers, Casey's message is direct: if your security team is not building agentic playbooks now, you are already behind. The model he describes is not simply "use AI in your SOC." It is a specific architectural pattern: decompose your security workflows into probabilistic nodes (where LLM judgment is appropriate) and deterministic nodes (where a script executes with perfect fidelity). Connect them deliberately.
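The probabilistic/deterministic split described above can be sketched as a tiny triage playbook. This is our own illustration, not Casey's implementation: `keyword_classifier` is a stand-in for an LLM call so the example runs offline, and the alert fields, threshold, and `isolate_host` callback are hypothetical.

```python
from typing import Callable


def keyword_classifier(summary: str) -> float:
    """Stand-in for an LLM judgement call; returns a confidence between 0 and 1."""
    return 0.9 if "credential dump" in summary.lower() else 0.2


def triage_alert(
    alert: dict,
    classify: Callable[[str], float],
    isolate_host: Callable[[str], None],
    threshold: float = 0.8,
) -> str:
    # Probabilistic node: model judgement on unstructured text.
    score = classify(alert["summary"])
    # Deterministic node: a fixed action that runs the same way every time.
    if score >= threshold:
        isolate_host(alert["host"])
        return "isolated"
    return "queued_for_review"
```

Keeping the two node types separate means the model only ever produces a score, while the action that touches production is a plain function you can test, audit, and rate-limit like any other script.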

"If your security teams aren't building this out already number one, they're getting behind. Number two, you can use that for controls verification, controls research, detection playbooks, actual detection. It's actually really, really good at prototyping."

Casey's own team demonstrated this in practice: in four hours, using open-source models from Hugging Face, they built a real-time audio impersonation capability that produces convincing voicemails from just seven seconds of sampled audio, zero-shot and with no fine-tuning. The point is not that this specific capability is the threat. The point is that your adversaries have access to the same tools, the same models, and the same four-hour build timeline. The security teams who have internalized this reality are building defenses that match it. The ones who haven't are waiting to be surprised.

Architecture Is the Forcing Function

One of the most practically useful frames from Casey's conversation is the question he poses about enterprise architecture: if you were starting your business today, what would you actually need? GitHub for code. Claude Code or Codex for AI-native development. Email. What else is genuinely necessary, versus a legacy of tools acquired when those were the only options available?

This is not an argument for reckless consolidation. It is an argument for honest architectural review: specifically, for identifying which elements of your current security stack were designed for a human-centric, session-based threat model and which can genuinely extend to cover agentic workloads. Casey's view is that the simplifying architecture for the new stack places identity at the core, with SIEM and EDR retaining their value for behavioral analytics and data collection, but many workflow-centric SaaS products becoming candidates for replacement by AI-native alternatives.

For security leaders building 2026 roadmaps, this conversation is a prompt to ask: what in my current architecture assumes a human is making every decision? And what happens to those assumptions when the answer is increasingly no?

Beyond Identity Ceros Product (Free Trial) - The product Jasson Casey references for AI agent identity and secure context management

NIST AI Risk Management Framework (AI RMF) - Foundational framework for governing AI systems in enterprise environments

CISA Guidance on AI-Enabled Threats - Federal guidance on securing AI systems and defending against AI-enhanced attacks

Sigstore: Software Supply Chain Signing - Open-source tooling for artifact integrity, directly relevant to CI/CD pipeline defense

SLSA Framework - Supply chain integrity levels for software artifacts

Microsoft Security Blog: RSAC 2026 Coverage - Primary source for the agentic threat model and Mandiant M-Trends 2026 data

Cyera Research CVE-2026-34040 Disclosure - Technical details on the Docker AuthZ bypass and AI agent exploitation proof of concept

Podcast Episode

Cloud Security Podcast - Full Episode with Jasson Casey - Complete transcript and audio for this week's featured conversation

Question for you? (Reply to this email)

🤔 If your AI agents had identities today, what’s the first behavior you’d monitor?

Next week, we'll explore another critical aspect of cloud security. Stay tuned!

We would love to hear from you 📢 if you have a feature or topic request, or if you would like to sponsor an edition of Cloud Security Newsletter.

Thank you for continuing to subscribe, and welcome to the new members of this newsletter community 💙

Peace!
