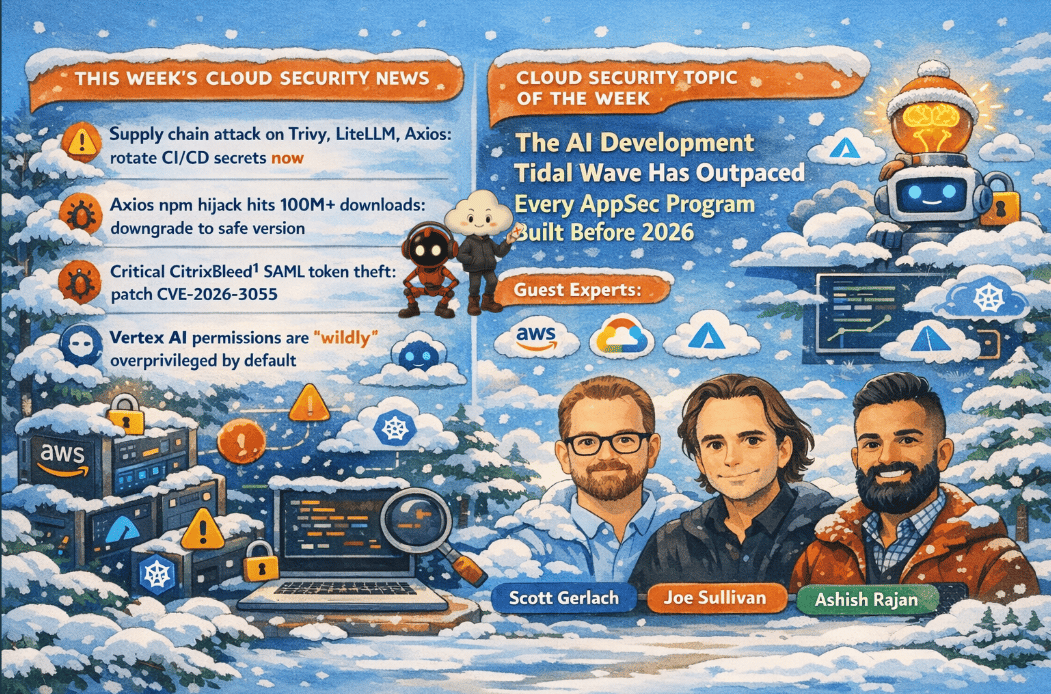

🚨TeamPCP & Axios Supply Chain Under Siege: Lessons from the CISO Playbook for AI-Accelerated AppSec

This edition covers the most consequential software supply chain attack since XZ Utils: the TeamPCP campaign that compromised Trivy, LiteLLM, Telnyx, and the Axios npm package across five developer ecosystems. Cloudflare's former CSO Joe Sullivan and StackHawk co-founder Scott Gerlach also share hard-won lessons on why Application Security, runtime testing, and AI-augmented development teams are reshaping the CISO's mandate in 2026. Keywords: supply chain attack, AppSec, DAST, CI/CD security, Vertex AI permissions, CISO operating model, AI-accelerated development, runtime security.

Hello from the Cloud-verse!

This week’s Cloud Security Newsletter topic: AppSec in the AI Era: Why Your 2026 Program Is Already Broken and What to Build Instead (continue reading)

In case this is your first Cloud Security Newsletter: you are in good company!

You are reading this issue along with friends and colleagues from companies like Netflix, Citi, JP Morgan, LinkedIn, Reddit, GitHub, GitLab, Capital One, Robinhood, HSBC, British Airways, Airbnb, Block, Booking Inc, and more. Like you, they subscribe to learn what's new in cloud security each week from their industry peers, and many also listen to Cloud Security Podcast and AI Security Podcast every week.

Welcome to this week’s Cloud Security Newsletter

The week of March 25–31, 2026 will be remembered as a watershed moment for cloud-native supply chain security. In the span of nine days, a single threat actor group weaponized four of the most trusted tools in the DevSecOps ecosystem, turning a security scanner, an AI proxy, a telephony SDK, and a ubiquitous JavaScript HTTP client into credential-harvesting machines targeting the AWS IAM keys, GCP service accounts, and Kubernetes secrets of thousands of enterprise pipelines worldwide.

What makes this week's news especially instructive is its timing: it arrives just as two of the most experienced voices in enterprise security, Joe Sullivan (former CSO of Cloudflare, Facebook, and Uber) and Scott Gerlach (co-founder and CSO of StackHawk), sat down to share their unfiltered views on how Application Security must fundamentally reinvent itself for the AI era.

Their conversation cuts to the heart of why this week's attacks succeeded: security teams that are reactive, tool-dependent without context, and not embedded in the engineering loop are now structurally exposed. The fix is neither simple nor quick, but the blueprint is clearer than it has ever been. [Listen to the episode]

⚡ TL;DR for Busy Readers

The TeamPCP supply chain campaign compromised Trivy, LiteLLM, Telnyx, and 100M+ download Axios. Rotate all CI/CD secrets from March 19–31 now.

Citrix NetScaler CVE-2026-3055 (CVSS 9.3) is actively exploited. Patch SAML IDP-configured appliances before your next maintenance window.

Google Vertex AI agents inherit dangerously broad IAM permissions by default. Adopt BYOSA architecture for every agentic workload immediately.

AI is 10x-ing code velocity and vulnerability volume simultaneously. AppSec must embed in the engineering loop, not review from the outside.

The CISO role is expanding to own AI transformation. Curiosity, adaptability, and business enablement are now core job requirements.

📰 THIS WEEK'S TOP 5 SECURITY HEADLINES

Each story includes why it matters and what to do next — no vendor fluff.

🚨 1. TeamPCP's Cascading Supply Chain Campaign Pivots to Ransomware After Breaching Five Ecosystems

What Happened

Between March 19–27, the financially motivated threat group TeamPCP (also tracked as PCPcat, ShellForce, and CipherForce) executed a cascading software supply chain attack across five developer ecosystems: GitHub Actions, Docker Hub, npm, PyPI, and OpenVSX. The attack chain opened with the group force-pushing malicious code to 76 of 77 version tags of Aqua Security's Trivy container scanner, exploiting an unsanitized GitHub Actions workflow to harvest credentials from thousands of CI/CD pipelines. Using those stolen credentials, TeamPCP sequentially compromised Checkmarx KICS IDE extensions, the LiteLLM AI proxy package (~480M PyPI downloads), and the Telnyx Python SDK. A credential-stealing payload tracked as CVE-2026-33634 (CVSS 9.4) was purpose-built to exfiltrate AWS IAM keys, GCP service account credentials, Azure environment variables, and Kubernetes secrets. As of March 30, TeamPCP has paused new supply chain compromises and announced a ransomware partnership with the new Vect RaaS operation, with at least one confirmed Vect ransomware deployment using TeamPCP-harvested credentials.

Why It Matters

This is the most consequential DevSecOps supply chain attack since XZ Utils, and it carries a threat profile specific to cloud and AI infrastructure teams. Three aspects demand immediate attention.

First, the attack deliberately weaponized security tooling. Trivy and Checkmarx KICS are not peripheral dependencies; they are tools granted elevated pipeline access by design. Any organization that ran these tools between March 19–27 without pinning to verified commit SHAs should treat its CI/CD credentials as fully compromised. Microsoft Defender for Cloud confirmed the core attack chain harvested AWS IAM, GCP, and Azure environment variables alongside Kubernetes secret enumeration across affected runners.

Second, the LiteLLM compromise represents a novel AI infrastructure attack vector. LiteLLM functions as a unified gateway to over 100 LLM APIs (OpenAI, Anthropic, AWS Bedrock, Vertex AI), meaning a single compromised instance exposes every AI provider credential the organization holds.

Third, the pivot to ransomware monetization signals the attack is far from over. TeamPCP is estimated to hold a 300GB trove of harvested credentials. Breach disclosures and extortion attempts are expected to increase through Q2 2026.

Recommended Actions: Immediately rotate all secrets accessible to pipeline runners that executed Trivy, Checkmarx KICS, or LiteLLM between March 19–27. Pin all GitHub Actions to full commit SHAs. Audit runner logs for outbound connections to checkmarx[.]zone, models.litellm[.]cloud, or tpcp.tar.gz archives. Treat low-impact credential alerts from this period as high-priority indicators of impending secondary intrusion.
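The log audit in the recommended actions can be partly automated. The following is a minimal sketch, assuming runner logs are available as plain text lines; the function name `find_ioc_hits` and the sample log lines are hypothetical, and the only indicators used are the three named above (with the defanging brackets removed).

```python
import re
from typing import Iterable

# Indicators copied from the recommended actions above, with the
# defanging brackets removed so they match raw log text.
INDICATORS = ("checkmarx.zone", "models.litellm.cloud", "tpcp.tar.gz")

_PATTERN = re.compile("|".join(re.escape(i) for i in INDICATORS), re.IGNORECASE)


def find_ioc_hits(lines: Iterable[str]) -> list[tuple[int, str, str]]:
    """Return (line_number, indicator, line) for every indicator seen in the log."""
    hits = []
    for number, line in enumerate(lines, start=1):
        for match in _PATTERN.finditer(line):
            hits.append((number, match.group(0).lower(), line.rstrip("\n")))
    return hits


if __name__ == "__main__":
    # Hypothetical log lines, for illustration only.
    sample = [
        "GET https://example.com/ok",
        "curl https://models.litellm.cloud/x",
        "tar xzf TPCP.tar.gz",
    ]
    for number, indicator, line in find_ioc_hits(sample):
        print(f"line {number}: {indicator}: {line}")
```

A substring match like this is deliberately coarse: it can surface a hit for triage, but a clean result does not prove a runner was unaffected, so it should not replace secret rotation.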

Sources: SANS Institute | Datadog Security Labs | Akamai | Infosecurity Magazine

🚨 2. Axios npm Hijack: North Korean-Attributed RAT Hits 100M+ Weekly Downloads

What Happened

An unknown attacker compromised the GitHub and npm accounts of the main developer of Axios, a widely used HTTP client library, and published npm packages backdoored with a malicious dependency that triggered the installation of droppers and remote access trojans. The attack was pre-staged approximately 18 hours before detonation. The malicious versions ([email protected] and [email protected]) were live for roughly three hours before removal on March 31. On April 1, 2026, Google Threat Intelligence Group publicly attributed the compromise to UNC1069, a North Korea-nexus, financially motivated threat actor, based on infrastructure overlaps and the use of WAVESHAPER.V2, an updated backdoor linked to the group's earlier activity. Although the malicious versions were removed within hours, Axios's presence in roughly 80% of cloud and code environments enabled rapid exposure, with observed execution in 3% of affected environments according to Wiz researchers.

Why It Matters

This is arguably the highest blast-radius npm compromise on record. The attack surface is your vendor's vendor's vendor, and this is what that looks like in practice. Organizations that had lockfiles pinning Axios to a specific version, or CI/CD policies that suppress automatic install scripts, were protected. Organizations that did not were exposed for a window measured in hours, with consequences that may take weeks to fully assess. The North Korean attribution connects this to a broader pattern of DPRK-linked actors targeting developer supply chains to harvest credentials and establish persistent access in cloud environments. The convergence with TeamPCP activity (itself linked to LAPSUS$ extortion) suggests a coordinated effort to stockpile access across enterprise environments.

Immediate Actions: Downgrade to [email protected] (or @0.30.3 for legacy users). Rotate all cloud keys, repository tokens, and API credentials exposed in affected environments. Check for outbound connections to sfrclak.com:8000. Inspect CI/CD pipeline logs for the exposure window: 00:21–03:15 UTC, March 31, 2026.
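When inspecting pipeline logs, it helps to filter install and build events down to the stated exposure window. The sketch below assumes timestamps are ISO 8601 strings and treats values without an offset as UTC; the function name `in_exposure_window` is hypothetical, and the window boundaries are the ones given above.

```python
from datetime import datetime, timezone

# Exposure window from the guidance above: 00:21-03:15 UTC, March 31, 2026.
WINDOW_START = datetime(2026, 3, 31, 0, 21, tzinfo=timezone.utc)
WINDOW_END = datetime(2026, 3, 31, 3, 15, tzinfo=timezone.utc)


def in_exposure_window(timestamp: str) -> bool:
    """Return True if an ISO 8601 timestamp falls inside the exposure window.

    Assumption: timestamps without an explicit offset are already in UTC.
    """
    # Older Python versions do not accept a trailing "Z", so normalise it.
    parsed = datetime.fromisoformat(timestamp.replace("Z", "+00:00"))
    if parsed.tzinfo is None:
        parsed = parsed.replace(tzinfo=timezone.utc)
    return WINDOW_START <= parsed <= WINDOW_END


if __name__ == "__main__":
    for ts in ("2026-03-31T01:00:00Z", "2026-03-31T04:00:00+02:00", "2026-03-31T03:16:00Z"):
        print(ts, in_exposure_window(ts))
```

Converting to UTC before comparing matters here: a runner logging in local time can look safely outside the window while actually sitting inside it.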

Sources: The Hacker News | StepSecurity | Huntress | SANS Institute

☁️ 3. Google Vertex AI "Double Agent" Flaw: AI Platform Permissions Create Cloud-Wide Exposure

What Happened

Palo Alto Networks Unit 42 disclosed a structural "blind spot" in Google Cloud's Vertex AI platform: the Per-Project, Per-Product Service Agent (P4SA) associated with AI agents deployed via the Agent Development Kit (ADK) carries dangerously broad permissions by default. An attacker can craft a malicious AI agent as a serialized Python pickle file, deploy it on Vertex AI's Reasoning Engine, query Google's metadata service to extract P4SA credentials, then break out of the isolated agent environment and operate as a highly privileged service account, gaining unrestricted read access to all GCS buckets and restricted Artifact Registry repositories in the project. Google has addressed the issue by revising its documentation and now strongly recommends a Bring Your Own Service Account (BYOSA) architecture for all Vertex AI deployments.

Why It Matters

This research surfaces a structural risk that will only grow as enterprises accelerate agentic AI deployments. AI agents are service identities with real permissions; they must be governed through your existing IAM framework, not treated as "just an app." The attack chain requires no zero-day: it abuses default configuration. Every organization running Vertex AI Agent Engine workloads should immediately audit P4SA permission scopes, move to BYOSA architectures, and apply IAM least-privilege review cycles before any agentic workload reaches production. The broader principle, surfaced directly in this week's guest conversation, is that non-deterministic AI code operating with overprivileged identities is the defining runtime security risk of 2026.
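One way to start the permission audit is to list every role bound to Vertex AI service agents in a project's IAM policy. The sketch below works on the standard IAM policy JSON shape (a `bindings` list of `role` and `members`), for example as exported by `gcloud projects get-iam-policy PROJECT_ID --format=json`. Two things are assumptions rather than facts from the research: the `gcp-sa-aiplatform` marker used to recognise Vertex AI service agent emails, and the watchlist of roles treated as broad. The function name and sample policy are hypothetical.

```python
# Assumption: Vertex AI service agent emails contain this marker.
# Confirm the naming against your own project before relying on it.
SERVICE_AGENT_MARKER = "gcp-sa-aiplatform"

# Illustrative watchlist only; tune it to your own least-privilege baseline.
BROAD_ROLES = frozenset({
    "roles/owner",
    "roles/editor",
    "roles/viewer",
    "roles/storage.admin",
    "roles/storage.objectViewer",
})


def service_agent_bindings(policy: dict,
                           marker: str = SERVICE_AGENT_MARKER,
                           watchlist: frozenset = BROAD_ROLES) -> list[tuple[str, str, bool]]:
    """Return (member, role, is_on_watchlist) for each matching service agent binding."""
    findings = []
    for binding in policy.get("bindings", []):
        role = binding.get("role", "")
        for member in binding.get("members", []):
            if member.startswith("serviceAccount:") and marker in member:
                findings.append((member, role, role in watchlist))
    return findings


if __name__ == "__main__":
    # Hypothetical policy, for illustration only.
    agent = "serviceAccount:service-123@gcp-sa-aiplatform-re.iam.gserviceaccount.com"
    policy = {"bindings": [
        {"role": "roles/editor", "members": [agent, "user:a@example.com"]},
        {"role": "roles/logging.logWriter", "members": [agent]},
    ]}
    for member, role, broad in service_agent_bindings(policy):
        print("REVIEW" if broad else "ok", role, member)
```

This only covers project-level bindings. Permissions granted on individual buckets or repositories, or packaged inside a service agent role, would need a separate review.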

Sources: The Hacker News | Unit 42 / Palo Alto Networks | SecurityWeek

🏥 4. LAPSUS$ Claims AstraZeneca Breach: Cloud Configs, Source Code, and CI/CD Secrets for Sale

What Happened

LAPSUS$ claimed responsibility for an alleged breach of AstraZeneca, posting on their Tor-based leak site and dark web forums. The group asserts they exfiltrated approximately 3GB of internal data including source code (Java, Angular, Python), cloud infrastructure configurations (AWS, Azure, Terraform), employee records, and access credentials including private RSA keys, vault data, and GitHub Enterprise tokens. On March 27, LAPSUS$ released sample data and added health data company Virta Health to their target list. AstraZeneca has not issued a public statement. Attribution in cybercrime forums is inherently unverified.

Why It Matters

The healthcare and life sciences sector has become a primary target for data extortion, and this incident illustrates why cloud infrastructure configurations, not source code, are the true crown jewel. If verified, the inclusion of Terraform files, AWS/Azure configs, and CI/CD secrets gives adversaries a complete blueprint of the cloud environment and the keys to access it. LAPSUS$ is evolving from public-shame extortion toward quiet data brokerage, offering full datasets to vetted buyers rather than dumping them publicly. This shift demands more proactive dark-web monitoring and rapid takedown incident-response playbooks for situations where IaC configs and secrets appear for sale.

Sources: Cybernews | SecurityWeek | SOCRadar

🛡️ 5. Google Workspace Deploys AI-Powered Ransomware Detection at GA, Enabled by Default

What Happened

Google moved its ransomware detection and file restoration features for Google Drive into General Availability on March 31, 2026. The updated AI model detects 14× more infections than the beta version, automatically pauses Drive file syncing upon detection, sends real-time alerts to users and admins via the Admin console Security Center, and enables bulk file restoration to pre-infection versions. Both features are enabled by default across Business, Enterprise, Education, and Frontline plans. Drive for Desktop v114 or later is required for full alert functionality on endpoints.

Why It Matters

This is a meaningful defensive capability at SaaS scale: automatic detection, sync interruption, and bulk file restoration without requiring a SIEM alert or analyst intervention. The operational priority is immediate: validate Drive for Desktop v114+ deployment coverage across your endpoint estate via MDM, verify admin alerting is configured in the Admin console Security Center, and confirm which OUs have the feature enabled. This is now table-stakes capability alongside Microsoft OneDrive's equivalent ransomware protection for Microsoft 365 subscribers.

Sources: Google Workspace Updates Blog | BleepingComputer

🎯 Cloud Security Topic of the Week:

AppSec in the AI Era: Why Your 2026 Program Is Already Broken and What to Build Instead

If there is a single thread connecting every story in this week's news brief, and a single most important insight from Joe Sullivan and Scott Gerlach's conversation, it is this: the security practices that felt adequate eighteen months ago are structurally insufficient for the speed and scale at which code is now being produced.

The TeamPCP and Axios supply chain attacks did not succeed because of exotic zero-days. They succeeded because developer tooling runs with elevated privileges in CI/CD pipelines, those pipelines assume everything upstream is trusted, and security teams have historically reviewed artifacts after the fact rather than validating the supply chain in motion. The attacks exploited the gap between how fast modern development moves and how slowly security has adapted to that velocity.

Joe Sullivan and Scott Gerlach spent a significant portion of their conversation mapping exactly this gap and what it will take to close it.

Featured Experts This Week 🎤

Joe Sullivan - Security Consultant & CEO of Ukraine Friends | Former CSO of Cloudflare, Uber, and Facebook | Former Federal Cybercrime Prosecutor, US Department of Justice

Scott Gerlach - Co-founder & Chief Security Officer, StackHawk | Former CSO at Stride

Ashish Rajan - CISO | Co-Host of AI Security Podcast, Host of Cloud Security Podcast

Definitions and Core Concepts 📚

Before diving into our insights, let's clarify some key terms:

DAST (Dynamic Application Security Testing): Security testing of running applications, testing behavior rather than just source code. Unlike SAST (Static Analysis), DAST reflects how the application actually behaves under attack conditions, dramatically reducing false positives.

Legacy DAST vs. Modern DAST: Legacy DAST tests known, publicly facing production assets on a quarterly or annual cadence, generating high false positive rates due to WAFs, CDNs, and incomplete coverage. Modern DAST tests every microservice, every PR/MR, 5–10 times per day in the pre-production environment, with near-zero false positives and developer-attributed findings.

BYOSA (Bring Your Own Service Account): Google's recommended architecture for Vertex AI deployments, in which each AI agent is assigned a customer-managed service account with scoped, least-privilege permissions rather than inheriting the default P4SA with its dangerously broad default access.

P4SA (Per-Project, Per-Product Service Agent): The default privileged identity automatically assigned to AI agents deployed on Google Cloud's Vertex AI platform. Unit 42 demonstrated that P4SA credentials can be extracted and abused to gain unrestricted access to GCS buckets, Artifact Registry, and Google Workspace data.

Supply Chain Attack: A cyberattack that compromises software, tooling, or dependencies upstream of the target, reaching downstream victims automatically when they install or update the poisoned component.

This week's issue is sponsored by Varonis

💡Our Insights from this Practitioner 🔍

The AI Development Tidal Wave Has Outpaced Every AppSec Program Built Before 2026

Scott Gerlach opens the conversation with a statement that every security leader should internalize: "Before, I would say even the end of last year, the AppSec programs were really still kind of reactive and just trying to keep up as best they could. And then AI gets down and everyone's like, wow, we're way behind now." - Scott Gerlach

This is not a theoretical concern; it is the structural reality that allowed the TeamPCP campaign to succeed. Developers using AI-assisted coding tools are generating code at a pace that far outstrips the traditional security review cadence. When a security team is still triaging tickets from the last sprint while this sprint's code has already shipped, they are not operating a security program; they are operating an audit log.

The 10x Vulnerability Problem and Why Tickets Are the Wrong Unit of Work

Joe Sullivan adds precision to Gerlach's observation with a point that should reframe how every AppSec team measures its own effectiveness: "The volume of code that they're sending over to security is 10x-ing. And unfortunately, the vulnerability volume is 10x-ing as well. And so if we're not careful, we're gonna be pushing back at them 10x the volume of issues. And we were already in trouble for pushing back too much at them." - Joe Sullivan

Gerlach's framing of the ticket-centric model as the telltale sign of an immature program cuts to the same point: "If you're making tickets, it's probably not mature enough." - Scott Gerlach

Tickets are a batched, asynchronous communication channel. Supply chain attacks, exploited zero-days, and AI-generated vulnerabilities operate on a real-time, continuous basis. The mismatch is not recoverable through better ticket prioritization; it requires a fundamentally different testing architecture.

Legacy DAST vs. Modern DAST: The Architecture Decision That Determines Your Blast Radius

Gerlach's distinction between legacy and modern DAST is one of the most practically actionable frameworks in this week's conversation, and it maps directly onto the supply chain risk profile of TeamPCP.

Legacy DAST tests known, public-facing production applications from outside the network perimeter, through WAFs and CDNs that generate false signals. The result: 90% false positive rates, sparse coverage, and findings that arrive too late. Modern DAST runs 5–10 times per day, tests every microservice and API as it moves through the CI/CD pipeline, runs inside the development environment, and delivers findings directly to the developer who introduced the change, at the moment they can actually fix it. "We just talked to a customer the other day who was talking about their DAST program. They're switching out; they had like a 90% false positive rate. And when they test with StackHawk, able to test closer to the thing you're testing, have more of a white box, gray box, it's 90% true positive rate versus 90% false positive rate." - Scott Gerlach

The operational implication is direct: organizations with security testing embedded inside their CI/CD pipelines have detection opportunities for compromised tooling that legacy scanning from outside production simply cannot provide.

AI Agents in the Development Loop: Opportunity, Risk, and the Identity Model Problem

Gerlach describes the ideal agentic state: Claude Code or Cursor, having written a new feature, automatically triggers a DAST scan against the running application, consumes the findings, and fixes them, all within the same agent loop, before a human even reviews the PR. "When Claude Code is done writing its feature that you ask it to write and it goes, 'I see a hook for, I should run the app, run StackHawk against the app, and then consume the findings from that and fix them as part of the Claude Code loop.' Now you're actually getting real security work into that and not burdening the developer with what is this ticket, where does this live." - Scott Gerlach

But Sullivan immediately surfaces the counterweight, and it maps precisely onto the Vertex AI "Double Agent" vulnerability disclosed this week: "A year from now, companies are gonna say, all right, we let you all run AI for a year, and you burn hundreds of thousands, if not millions of dollars on tokens. Can we get back to some deterministic software solution?" - Joe Sullivan

Gerlach captures the identity model risk that connects both threads: "When you're rolling those things out, thinking about the identity model and the security model that it inherits from you and you're like, wait a second, I don't want it to have this much access. How can I limit that access? And then what does that do for the enterprise? What does that scale?" - Scott Gerlach
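The scan-and-fix loop described in this section can be sketched in a few lines. This is an illustration of the control flow only, not StackHawk's or any coding agent's actual integration: `Finding`, `scan_and_fix_loop`, `run_scan`, and `apply_fix` are hypothetical names, and the scanner and fixer are passed in as plain callables. The `max_rounds` budget is one way to reflect Sullivan's cost concern by bounding how long the agent may iterate.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Finding:
    """A single scanner finding (hypothetical shape)."""
    rule: str
    path: str


def scan_and_fix_loop(run_scan: Callable[[], list[Finding]],
                      apply_fix: Callable[[Finding], None],
                      max_rounds: int = 3) -> list[Finding]:
    """Scan, hand each finding to the fixer, and rescan.

    Stops when a scan comes back clean or the round budget is spent.
    Returns whatever findings remain, so a human can review them.
    """
    findings = run_scan()
    for _ in range(max_rounds):
        if not findings:
            break
        for finding in findings:
            apply_fix(finding)
        findings = run_scan()
    return findings


if __name__ == "__main__":
    # Stand-ins for a real scanner and a real coding agent.
    open_issues = {Finding("sqli", "/login"), Finding("xss", "/search")}
    remaining = scan_and_fix_loop(
        run_scan=lambda: sorted(open_issues, key=lambda f: f.rule),
        apply_fix=open_issues.discard,
    )
    print("remaining findings:", remaining)
```

Returning the leftover findings, rather than looping until clean, keeps the loop deterministic in cost and leaves an explicit handoff point for the PR reviewer.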

The CISO's Expanding Mandate: From Security Gatekeeper to AI Transformation Lead

"In 2026, the CEO is turning to IT and saying, I want you to lead the AI transformation for the company. And that person is the security leader too. So we have more responsibility and we have more interaction with the CEO. And that's not gonna go backwards." - Joe Sullivan

For cloud security professionals, the implication is not abstract: if your CEO is asking the head of security to be the AI governance and transformation lead, the skills you invested in securing cloud-native infrastructure need to extend to governing non-deterministic, agentic systems that, as the Vertex AI research demonstrates, can become insider threats when misconfigured.

What the Next-Generation Security Team Looks Like

Gerlach names the anti-pattern explicitly: "The people that have to have well-defined procedures and they wanna stay in the box, that's probably the anti-pattern for the next-gen security team." - Scott Gerlach

Sullivan's prescription is equally direct: curiosity is now a core security competency. "If you're not excited about AI and digging in and playing around with the new things, how are you going to keep up with the product and engineering teams? We can't fall for that sunk cost fallacy. We can't be resistant to change at this time. We have to be in discovery mode." - Joe Sullivan

Security teams that embed themselves in engineering conversations about AI tooling adoption, rather than auditing from outside after the fact, will be in a structurally better position six months from now.

Practical Takeaways for Cloud Security Leaders

Embed AppSec in the engineering loop, not after it. If your team is still receiving code and generating tickets, you are operating legacy AppSec. Start conversations with engineering about where security testing can run inside the CI/CD pipeline itself.

Shift from false positive tolerance to true positive precision. A 90% false positive rate is a testing architecture problem, not a signal problem. Test inside the development environment to eliminate WAF and CDN interference.

Treat AI agents as service identities, not applications. Apply your IAM governance framework to every agentic workload before production deployment. Default permissions are almost always too broad. BYOSA on Vertex AI; scoped service accounts everywhere else.

Audit your CI/CD supply chain assumptions today. Pin GitHub Actions to full commit SHAs, not floating tags. Assume any runner that executed Trivy, LiteLLM, Telnyx, or Axios between March 19–31 is compromised until proven otherwise.

Position security as a business enabler for AI transformation. CISOs who approach AI as purely a risk management exercise will be sidelined. Those who help engineering teams ship AI features securely and at speed will own one of the most important mandates in their organization.
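The pinning takeaway above is easy to check mechanically. The following is a rough sketch that scans GitHub Actions workflow text for `uses:` references that are not pinned to a full 40-character commit SHA. It uses a line-based regular expression rather than a YAML parser, so it is an approximation; the function name `unpinned_actions` and the `example-org` actions in the sample are hypothetical.

```python
import re

# Matches "uses: owner/repo@ref" lines, with or without a leading list dash.
_USES = re.compile(r"^\s*(?:-\s*)?uses:\s*['\"]?([^'\"\s#]+)")
_FULL_SHA = re.compile(r"[0-9a-f]{40}")


def unpinned_actions(workflow_text: str) -> list[str]:
    """Return action references that are not pinned to a full commit SHA."""
    unpinned = []
    for line in workflow_text.splitlines():
        match = _USES.match(line)
        if not match:
            continue
        reference = match.group(1)
        # Local actions and Docker images are out of scope for this check.
        if reference.startswith("./") or reference.startswith("docker://"):
            continue
        _, _, ref = reference.partition("@")
        if not _FULL_SHA.fullmatch(ref):
            unpinned.append(reference)
    return unpinned


if __name__ == "__main__":
    # Hypothetical workflow fragment, for illustration only.
    sample = """
steps:
  - uses: actions/checkout@v4
  - uses: example-org/example-action@0123456789abcdef0123456789abcdef01234567
  - name: local build
    uses: ./.github/actions/build
  - uses: example-org/other-action@main
"""
    for reference in unpinned_actions(sample):
        print("not pinned to a commit SHA:", reference)
```

Floating tags and branch names are flagged because whoever controls the upstream repository can move them, which is how force-pushed version tags reach downstream pipelines.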

AppSec & DevSecOps Guidance

Podcast Episode

Cloud Security Podcast Episode with Scott & Joe

Question for you? (Reply to this email)

🤔 Is your AppSec team embedded inside the engineering AI loop or are they still reviewing tickets after the code ships?

Next week, we'll explore another critical aspect of cloud security. Stay tuned!

📬 Want weekly expert takes on AI & Cloud Security? [Subscribe here]

We would love to hear from you📢 for a feature or topic request or if you would like to sponsor an edition of Cloud Security Newsletter.

Thank you for continuing to subscribe, and welcome to the new members in this newsletter community💙

Peace!

Was this forwarded to you? You can sign up here to join our growing readership.

Want to sponsor the next newsletter edition? Let's make it happen.

Have you joined our FREE Monthly Cloud Security Bootcamp yet?

Check out our sister podcast, AI Security Podcast.